AI security statistics for 2026 show a sharp rise in AI-driven cyber threats, data breach costs, and enterprise risk exposure. Organizations now face increased phishing automation, deepfake fraud, prompt injection attacks, and generative AI data leaks.

At the same time, companies invest heavily in AI-powered defense, governance frameworks, and Zero Trust strategies to reduce risk and improve resilience.

This AI Security Statistics 2026 report highlights the latest data, threat trends, market growth, and regulatory developments shaping enterprise AI security.

AI Security Statistics 2026

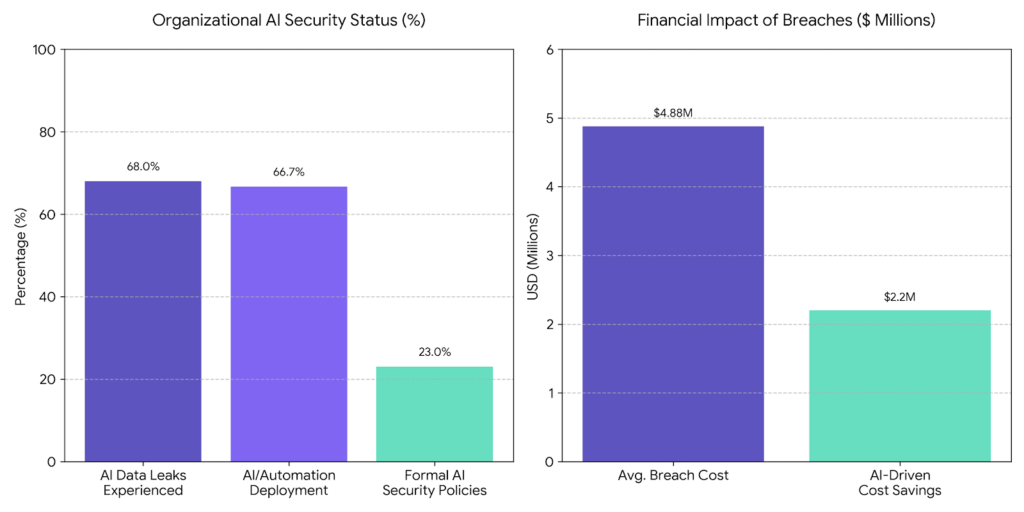

- The global average data breach cost reached $4.88 million (IBM Cost of a Data Breach Report) (IBM)

- Reported cybercrime losses exceeded $16.6 billion – A 33% increase from 2023’s $12.5 billion (FBI IC3 Annual Report).

- 68% of organizations have experienced data leaks linked to AI tool usage, yet only 23% have formal security policies in place (Metomic State of Data Security Report)

- AI-generated phishing emails show significantly higher engagement rates than traditional phishing; phishing attacks targeting financial institutions have surged 1,265% since 2022 (SlashNext State of Phishing Report).

- Two out of three organizations now deploy AI and automation across their SOC environments, with extensive use cutting breach costs by an average of $2.2 million (IBM Cost of a Data Breach Report)

- Prompt injection holds the #1 spot on the OWASP Top 10 for LLM Applications 2025, with supply chain vulnerabilities ranked #3 (OWASP, 2025)

AI Security Skills Gaps Among Security Professionals

Most security teams know CVEs, network intrusions, and endpoint defense. AI-specific attacks work differently, and almost no one has trained for them. Only 24% of enterprises have a dedicated AI security governance team. Prompt injection bypasses your entire stack.

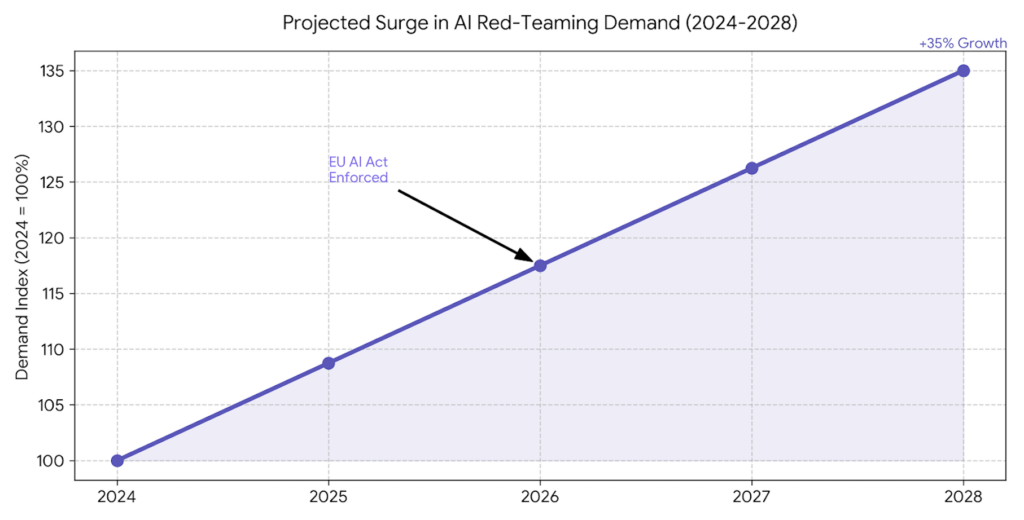

Shadow AI is already inside your org. AI red-teaming demand is projected to surge 35% by 2028, with almost no supply to meet it. The EU AI Act is enforced in August 2026. The skills gap is the threat.

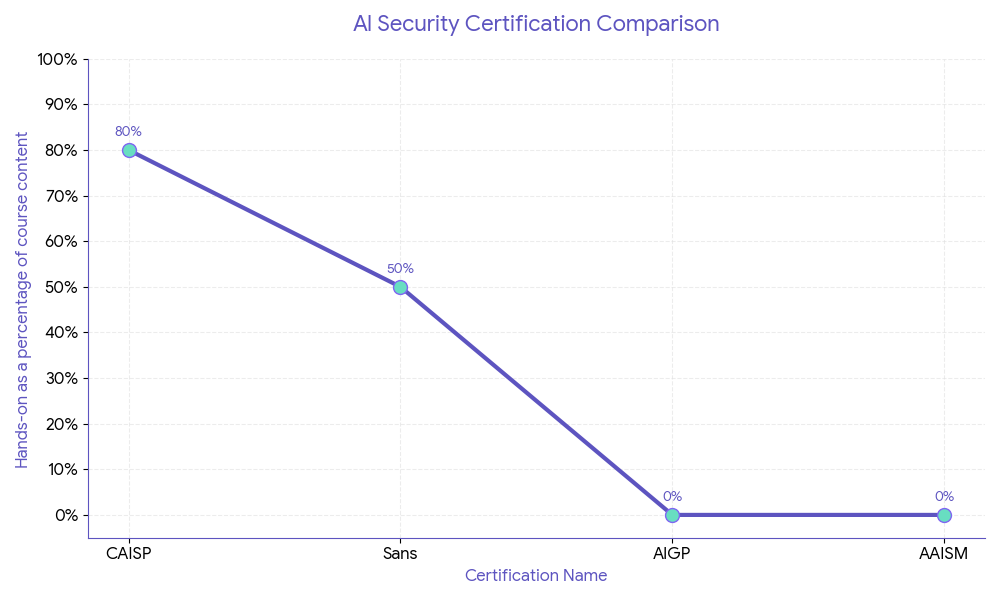

AI Security Certification Comparison

Every cert on this list solves one piece of the puzzle. AAISM and AAIA focus on management and audit. AIGP is built for policy and legal teams. SEC598 goes deep on automation but skips governance entirely.

The Certified AI Security Professional (CAISP) is the only one that covers the full stack. From identifying prompt injection in a live system to aligning with NIST AI RMF to leading an AI red team engagement. It’s mapped to the OWASP LLM Top 10 and current attack patterns like agentic AI exploitation.

Certified AI Security Professional

Secure AI systems: OWASP LLM Top 10, MITRE ATLAS & hands-on labs.

In 2026, it is significant for three concrete reasons:

- Job market breadth. A CAISP-certified professional can sit in a SOC, lead a red team engagement on an LLM, or brief a CISO on AI governance gaps. No other cert on this list gives you that range.

- Cost-to-value ratio. SANS SEC598 runs upward of $5,000–$8,000. CAISP delivers broader, more current AI security coverage at a discounted price.

- Built for the 2026 threat surface. CAISP’s curriculum is mapped directly to the OWASP LLM Top 10, NIST AI RMF, and current attack patterns like AI supply chain attacks. These aren’t afterthoughts. They’re the curriculum.

One cert locks you into a lane. CAISP puts you on the whole road. In 2026, that’s the difference between a professional who can flag an AI risk and one who can stop it, govern it, and brief the board on it.

Enterprise AI Security Risks

AI-driven threats are no longer theoretical; 77% of businesses reported an AI-related security incident in 2024, costing enterprises an average of $4.88 million per breach, the highest in history. (IBM Cost of a Data Breach Report)

Generative AI Data Leakage

Employees are unknowingly turning GenAI tools into data exfiltration channels; Samsung famously banned ChatGPT after engineers leaked proprietary source code, and a 2024 Cyberhaven study found that 11% of data employees paste into ChatGPT is confidential, exposing trade secrets, PII, and internal IP at an unprecedented scale. (Cyberhaven Research)

Prompt Injection Attacks

Prompt injection has emerged as the #1 vulnerability on the OWASP Top 10 for LLM Applications; NIST reported a >2,000% increase in AI-specific CVEs since 2022, as attackers increasingly hijack AI agents by embedding malicious instructions directly into user inputs or external data sources. (OWASP LLM Top 10, 2025) (NIST NVD)

Defensive AI Strategies

Organizations leveraging AI-powered defenses are gaining a measurable edge; companies using AI and automation in security operations contained breaches 108 days faster and saved an average of $2.22 million more than those without AI-driven defenses.

AI in SOC automation & threat detection

Modern SOCs are drowning in alert fatigue; security teams receive an average of 4,484 alerts per day and spend up to 27% of their time on false positives, but AI-augmented SOCs have demonstrated a 50% reduction in mean time to detect (MTTD) and a 60% drop in manual triage workload, fundamentally reshaping how analysts respond to threats at scale. (Tines SOC Automation Report) (Ponemon Institute)

AI-powered anomaly detection & response

Static rule-based detection is no match for modern adversaries; AI-driven behavioral analytics platforms detect up to 95% of insider threats and unknown malware variants that signature-based tools miss entirely, with Darktrace reporting that its autonomous AI responded to threats in an average of 2 seconds, compared to the industry average human response time of 196 days to identify a breach. (Darktrace Annual Threat Report)

Model Monitoring & Red Teaming

Deploying an AI model without continuous monitoring is a critical security blind spot; Microsoft’s AI Red Team has conducted over 200 dedicated red team operations on AI systems since 2018, and NIST’s AI Risk Management Framework now explicitly mandates adversarial testing, as studies indicate that unmonitored production models degrade in security posture by up to 40% within 6 months of deployment due to data drift and emerging attack patterns. (Microsoft AI Red Team) (NIST AI RMF)

Zero Trust Integration for AI Systems

AI systems are high-value targets that demand the strictest access controls; 86% of security leaders say Zero Trust architecture is critical or very important for securing AI workloads, and organizations that have implemented Zero Trust report 50% fewer successful lateral movement attacks, directly limiting an adversary’s ability to pivot from a compromised AI endpoint into core enterprise infrastructure. (Okta State of Zero Trust Report)

Regulatory & Compliance Focus

The regulatory space for AI security is tightening rapidly; over 25 countries have introduced or enacted AI-specific legislation since 2023, and Gartner predicts that by 2026, more than 50% of large enterprises will face mandatory AI compliance audits, making regulatory alignment not just a legal obligation but a frontline security imperative.

NIST AI Risk Management Framework (AI RMF)

The NIST AI RMF has rapidly become the de facto standard for enterprise AI governance; over 70% of U.S. federal agencies and a growing number of Fortune 500 companies have formally adopted or aligned their AI security programs to the NIST AI RMF since its release in January 2023, with its four core functions; govern, map, measure, and manage-providing the most comprehensive publicly available blueprint for operationalizing AI risk at scale. (NIST AI RMF) (NIST AI RMF Adoption Report)

International alignment: OECD, ISO, EU AI Act

AI security governance is converging globally but at uneven speeds; the EU AI Act, the world’s first comprehensive binding AI regulation, classifies high-risk AI systems across 8 critical sectors and imposes fines of up to €35 million or 7% of global annual turnover for non-compliance, while ISO/IEC 42001, the first international AI management system standard, has already seen adoption inquiries from over 100 countries within its first year of publication. (EU AI Act) (ISO/IEC 42001) (OECD AI Principles)

Mandatory AI Risk Classification, Reporting & Transparency

Regulators are moving beyond voluntary guidelines into enforceable mandates; the EU AI Act requires all high-risk AI systems to maintain detailed technical documentation, undergo conformity assessments, and register in a public EU database before market deployment, while the U.S. Executive Order on AI mandates that developers of foundation models with over 10^26 FLOPs of compute report safety test results directly to the federal government, setting a new global precedent for mandatory AI transparency. (EU AI Act) (White House Executive Order on AI)

Industry-Specific AI Security Insights

No industry is immune to AI-driven threats; the average cost of a cyberattack has risen 15% year-over-year across all sectors, with AI being cited as both the primary attack accelerant and the most promising defensive tool across finance, healthcare, SaaS, and government alike.

| Metric | Data Point | Source |

| Average cost of a financial data breach | $6.08 million | IBM, 2024 |

| AI-generated deepfake fraud losses | $25 billion globally in 2024 | Deloitte, 2024 |

| Increase in AI-powered phishing attacks targeting banks | 1,265% since 2022 | SlashNext, 2024 |

| Financial firms using AI for fraud detection | 70% | Mastercard, 2024 |

| Reduction in false positives using AI fraud detection | Up to 60% | McKinsey, 2024 |

| Algorithmic trading manipulation incidents reported | 38% increase YoY | FINRA, 2024 |

(Deloitte AI Fraud Report) (SlashNext State of Phishing)

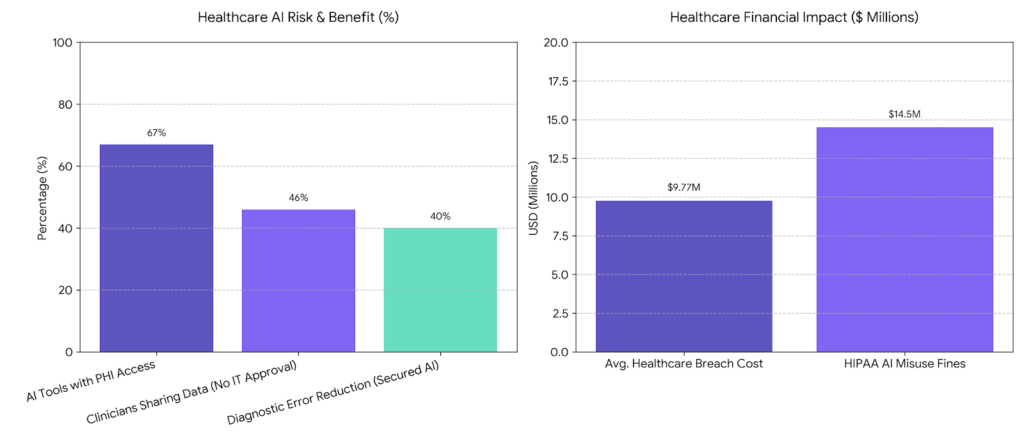

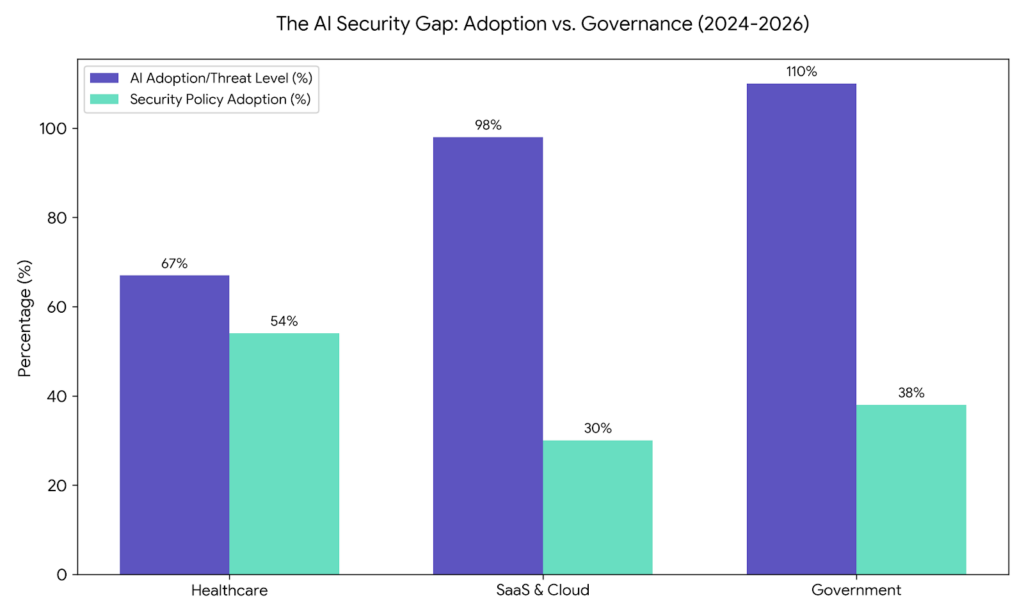

Healthcare: PHI Exposure via AI Tools

Healthcare AI adoption is accelerating faster than its security guardrails; the sector recorded the highest average data breach cost of any industry for the 13th consecutive year at $9.77 million per incident.

| Metric | Data Point | Source |

| Average cost of a healthcare data breach | $9.77 million | IBM |

| Healthcare organizations using AI tools with PHI access | 67% | Ponemon Institute |

| AI-related PHI exposure incidents reported in 2023 | 725 breaches affecting 133M+ records | HHS OCR |

| Clinicians sharing patient data with AI tools without IT approval | 46% | Kaspersky |

| HIPAA penalties related to AI misuse | $14.5 million in fines issued | HHS |

| Reduction in diagnostic error using secured AI tools | Up to 40% | WHO AI in Health Report |

SaaS & Cloud: API & Supply Chain Vulnerabilities

The explosion of SaaS-embedded AI has created a sprawling, largely unaudited attack surface; 98% of organizations use at least one third-party SaaS application with AI capabilities embedded, yet fewer than 30% have a formal AI vendor risk assessment process in place.

(Grip Security SaaS Risk Report)

| Metric | Data Point | Source |

| Percentage of breaches involving cloud assets | 45% | IBM |

| Average number of SaaS apps per enterprise | 371 apps | BetterCloud |

| API-related security incidents in AI platforms | 78% increase YoY | Salt Security |

| AI supply chain attacks via third-party models | 3x increase since 2022 | CrowdStrike |

| Organizations with no AI vendor security policy | 62% | Gartner |

| Cost of a cloud-based AI breach vs. on-premise | 28% higher on average | IBM |

(Salt Security API Security Report) (CrowdStrike Global Threat Report)

Government: AI Security in Critical Infrastructure

Nation-state actors are increasingly weaponizing AI to target critical infrastructure; CISA identified AI-assisted cyberattacks against critical infrastructure as the #1 emerging threat of 2024, with government and defense sectors experiencing a 110% increase in AI-augmented intrusion attempts year-over-year. (CISA Threat Landscape Report)

| Metric | Data Point | Source |

| AI-augmented attacks on critical infrastructure | 110% increase YoY | CISA, 2024 |

| Government agencies with a formal AI security policy | Only 38% | GAO, 2024 |

| Nation-state AI cyberattack incidents reported | 57 major incidents in 2024 | Microsoft MSTIC, 2024 |

| Average dwell time for AI-assisted intrusions in gov networks | 197 days | Mandiant, 2024 |

| U.S. federal AI security budget allocation for 2025 | $3.1 billion | OMB, 2024 |

| Critical infrastructure sectors with AI-specific risk frameworks | Only 4 of 16 sectors | NIST, 2024 |

(Microsoft Digital Defense Report) (Mandiant M-Trends Report)

AI Security Market & Spending Trends

AI security has graduated from a discretionary budget line to a boardroom priority; the global AI security market is projected to grow from $24.3 billion in 2024 to $133.8 billion by 2030, at a CAGR of 21.9%, making it one of the fastest-growing segments in the entire cybersecurity industry. (MarketsandMarkets AI Security Report)

AI Security Investment Growth (2026 – 2028 projections)

Enterprise security budgets are being rapidly reallocated toward AI-native defenses; Gartner forecasts that by 2027, more than 40% of all cybersecurity spending will be directly tied to AI-related capabilities, up from just 8% in 2023, as organizations race to close the widening gap between AI-powered attacks and legacy defense infrastructure. (Gartner Security Spending Forecast) (IDC Worldwide Security Spending Guide)

| Year | Global AI Security Market Size | YoY Growth | Key Investment Driver |

| 2024 | $24.3 billion | Baseline | GenAI threat surface expansion |

| 2025 | $30.1 billion | +23.9% | Regulatory compliance mandates |

| 2026 | $38.2 billion | +26.9% | AI SOC automation adoption |

| 2027 | $52.7 billion | +37.9% | Zero Trust AI integration |

| 2028 | $72.4 billion | +37.4% | AI supply chain security |

| 2030 | $133.8 billion | +86.6% | Full-stack AI security platforms |

(MarketsandMarkets) (Grand View Research)

Adoption of AI-Native Security Platforms

The shift from bolt-on AI features to purpose-built AI-native security platforms is accelerating; CrowdStrike, Microsoft Security, and Palo Alto Networks collectively reported a 47% increase in AI-native platform deployments in 2024, with enterprises citing autonomous threat response, reduced analyst workload, and real-time model monitoring as the top three adoption drivers. (CrowdStrike Annual Report,) (Palo Alto Networks Fiscal Report)

| Metric | Data Point | Source |

| Enterprises using AI-native security platforms | 45% in 2024, up from 18% in 2022 | Gartner |

| Reduction in MTTR with AI-native platforms | Up to 63% faster | CrowdStrike |

| AI-native platform market share vs. legacy SIEM | 34% vs. 66% in 2024; projected 58% vs. 42% by 2027 | IDC |

| Security vendors with embedded GenAI capabilities | 82% of the top 20 vendors | Forrester |

| Average ROI on AI-native security platform investment | 179% over 3 years | Forrester TEI Study |

Enterprise Risk Reduction Through AI Monitoring & Governance

AI monitoring and governance are delivering quantifiable risk reduction outcomes; enterprises with mature AI governance programs report 45% fewer security incidents and resolve breaches 70 days faster than organizations operating without formal AI oversight structures, signaling that governance is no longer a compliance checkbox but a direct performance multiplier for security teams.

(McKinsey State of AI Report) (IBM Cost of a Data Breach Report)

| Metric | Data Point | Source |

| Reduction in AI-related incidents with governance programs | 45% fewer incidents | McKinsey |

| Faster breach resolution with AI monitoring tools | 70 days faster on average | IBM |

| Enterprises with a dedicated AI security governance team | Only 24% in 2024 | Gartner |

| Cost savings from AI-driven continuous model monitoring | $1.76 million per year on average | Ponemon Institute |

| Organizations with real-time AI model risk dashboards | 9% currently; 67% planned by 2026 | Forrester |

| Reduction in regulatory fines with AI compliance monitoring | Up to 38% lower penalties | Deloitte |

(Ponemon Institute Cost of AI Risk Report) (Deloitte AI Governance Survey)

AI Security Forecast (2026 – 2028)

The next three years will define the security posture of the AI era; Gartner predicts that by 2028, AI agents will autonomously execute over 15% of all enterprise security decisions, forcing organizations to rethink threat models, workforce structures, regulatory obligations, and financial risk transfer strategies from the ground up. (Gartner Emerging Tech Forecast)

Autonomous AI Agents as New Attack Vectors

Autonomous AI agents are rapidly becoming the most consequential unsecured asset in the enterprise; OpenAI and Google DeepMind both flagged agentic AI systems as their #1 near-term safety concern in 2024, with researchers demonstrating that compromised AI agents can exfiltrate data, escalate privileges, and laterally traverse networks with zero human interaction, a threat vector that 80% of current enterprise security stacks are entirely unprepared to detect. (OpenAI Safety Report,) (Google DeepMind Safety Research)

Rise of AI Red-Teaming & Incident Simulation Roles

AI red-teaming is transitioning from a niche research discipline into a mainstream enterprise security function; the U.S. Bureau of Labor Statistics projects a 35% surge in demand for adversarial AI testing roles by 2028, and following the White House Executive Order on AI, all major U.S. federal contractors are now required to conduct pre-deployment red team evaluations, catalyzing an entirely new professional category that LinkedIn reported as the fastest-growing cybersecurity job title of 2026. (U.S. BLS Occupational Outlook) (LinkedIn Jobs on the Rise)

Regulatory Enforcement & Global Compliance Impact

Regulatory grace periods are ending and enforcement actions are beginning; the EU AI Act’s high-risk provisions become fully enforceable by August 2026, and the European Data Protection Board issued €1.2 billion in GDPR fines tied to AI data processing violations in 2023 alone, signaling that regulators globally are prepared to impose material financial consequences on organizations that treat AI compliance as optional. (EU AI Act Timeline) (EDPB Annual Report)

AI Risk Insurance Adoption Trends

AI risk is rapidly becoming an insurable and increasingly mandatory line item on enterprise balance sheets; the global AI liability insurance market is projected to reach $4.3 billion by 2028, and Munich Re reported that over 65% of new cyber insurance policies issued in 2024 now include specific AI risk exclusion clauses, meaning organizations without demonstrable AI governance frameworks face either coverage denial or prohibitively expensive premiums. (Munich Re Cyber Insurance Report) (Allied Market Research)

Conclusion

The data makes one thing clear: AI security is no longer a future problem. Breaches are costlier, attack surfaces are wider, and most security teams are still catching up. Organizations that treat AI governance as optional will pay for it. In regulatory fines, breach costs, and lost trust. The professionals who build real AI security skills now won’t just be better at their jobs. They’ll be the ones running the function when everyone else is scrambling.

If you’re ready to build those skills, the Certified AI Security Professional (CAISP) course gives you the technical depth and practical coverage to get there. No fluff. Just the skills the 2026 threat environment actually demands.

Certified AI Security Professional

Secure AI systems: OWASP LLM Top 10, MITRE ATLAS & hands-on labs.

FAQs

The top AI security risks in 2026 include prompt injection attacks, autonomous AI agent exploitation, shadow AI usage, model poisoning, and AI supply chain vulnerabilities; Gartner identifies AI-specific threats as the #1 emerging risk category for enterprises, with generative AI expanding the attack surface faster than most security teams can respond.

AI-related breaches are among the most expensive security incidents on record; the global average cost of a data breach reached $4.88 million in 2024, with breaches involving AI systems or GenAI tools carrying a premium cost due to extended dwell times, regulatory penalties, and reputational damage.

Enterprises mitigate GenAI data leakage through a combination of AI usage policies, data loss prevention (DLP) tools integrated with GenAI platforms, employee training, and output monitoring; organizations with formal GenAI governance policies reduce data leakage incidents by up to 46% compared to those with no controls in place.

Prompt injection is an attack where malicious instructions are embedded into inputs or external data to hijack an AI model’s behavior, bypassing its safety guardrails entirely; it ranks as the #1 vulnerability in the OWASP Top 10 for LLM Applications and is particularly dangerous in agentic AI systems where a single successful injection can trigger unauthorized actions, data exfiltration, or full system compromise with no human in the loop.

AI supercharges SOC operations by automating alert triage, correlating threat signals across millions of events in real time, and enabling autonomous incident response; AI-augmented SOCs detect threats 50% faster and reduce analyst workload by up to 60%, allowing security teams to shift focus from reactive alert management to proactive threat hunting and strategic defense.